I was contacted by a reporter to comment on the apparently radical difference between the seasonal forecasts currently available for the upcoming winter here in the northeast. The private company AccuWeather predicts a cold winter for us, while the Climate Prediction Center (CPC), a US government facility under the National Oceanic and Atmospheric Administration (NOAA), predicts that a warm winter is more likely. This post is an expanded version of the comments I sent him by email.

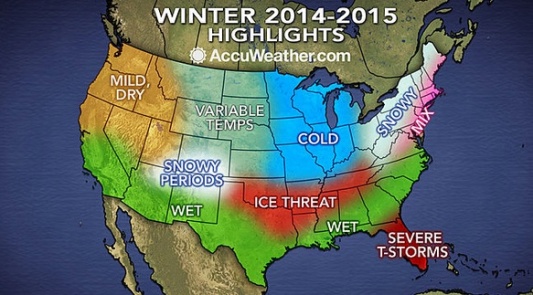

Here is the map showing the current AccuWeather forecast for this winter.

The map is accompanied by an article stating the forecast in words. It begins: “Though parts of the Northeast and mid-Atlantic had a gradual introduction to fall, winter will arrive without delay. Cold air and high snow amounts will define the season.” Those are confident statements, with no expression of uncertainty. The rest of the article is in the same vein.

Here is a salon.com story based on the AccuWeather forecast. The headline is “Bad news, America: The Polar Vortex is coming back!”

Here is a map showing NOAA’s temperature forecast for December through February in graphic form.

It shows warm for the northeast, where AccuWeather showed cold. But I am not really interested in that difference. The more important difference is that NOAA’s map shows probabilities.

The NOAA map states the forecast in terms of the probability that the temperature will be normal, above normal, or below normal. These categories are defined as terciles: ranges that each capture 1/3 of the historical data, so that 1/3 of all winters have fallen in each range. Thus if we had no other information (no current weather data, no forecast models, etc.), we would say there are equal chances of above normal, normal, or below normal, with a 33% chance for each. Areas where this is the case in NOAA’s judgment are shown as white on the map. Red means the chance of above normal is significantly greater than that for below normal; blue means vice versa. The probabilities for either above or below are nowhere much greater than about 50%, meaning that even where the map is red (warm is more likely), there is still a significant chance of cold. In other words, the forecast is uncertain.
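To make the tercile idea concrete, here is a minimal Python sketch of how the three categories can be defined from a historical record, and why the no-information forecast comes out at 33% for each. The 30 “historical” winter temperatures are synthetic random numbers, not real observations, and the function names are hypothetical; NOAA’s actual categories come from its own observational base period and procedures, which this does not attempt to reproduce.

```python
import random
import statistics


def tercile_bounds(history):
    """Boundaries splitting the historical record into three equally populated ranges."""
    lower, upper = statistics.quantiles(history, n=3)
    return lower, upper


def classify(value, lower, upper):
    """Label a winter as below normal, normal, or above normal."""
    if value < lower:
        return "below normal"
    if value > upper:
        return "above normal"
    return "normal"


# Synthetic stand-in for 30 historical winter-mean temperatures (deg C).
# These are random numbers, NOT real observations.
rng = random.Random(42)
history = [rng.gauss(-1.0, 1.5) for _ in range(30)]

lower, upper = tercile_bounds(history)
counts = {"below normal": 0, "normal": 0, "above normal": 0}
for t in history:
    counts[classify(t, lower, upper)] += 1

# Each range holds one third of the record by construction, which is why
# the "no other information" forecast is 1/3 for every category.
print(counts)
print({k: v / len(history) for k, v in counts.items()})
```

The mean and spread used to generate the synthetic record don’t matter here: the boundaries are placed at the one-third and two-thirds points of the record itself, so any 30 distinct values split 10/10/10.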

Here is a USA Today article with some statements from NOAA CPC Acting Director Mike Halpert, expressing that uncertainty in words.

The current state of the science is such that seasonal forecasts like these have only a modest amount of skill, even in the parts of the world where they are best. That means if you were to bet on them every season for many years, you would come out ahead on net, but not by much. The tercile probabilities, with their modest departures from 33%, communicate that.
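The betting interpretation can be checked with a little arithmetic. The sketch below assumes, hypothetically, a reliable forecast that raises the chance of the favored tercile from the climatological 1/3 to 40%, and a bookmaker who pays back 3 units on a winning 1-unit bet, which is a fair price only for a no-skill 1/3 forecast. Both numbers are illustrative, not taken from any real forecast, and the function names are made up for this example.

```python
import random


def expected_profit_per_bet(p_hit, payout=3.0):
    """Expected net gain of a 1-unit bet that pays back `payout` units if it wins."""
    return p_hit * payout - 1.0


def simulate(p_hit, seasons, payout=3.0, seed=0):
    """Bet 1 unit on the favored tercile every season.

    Returns (average net gain per season, fraction of seasons won).
    Assumes the forecast is reliable: the favored tercile really occurs
    with probability p_hit.
    """
    rng = random.Random(seed)
    net = 0.0
    hits = 0
    for _ in range(seasons):
        if rng.random() < p_hit:  # the favored tercile verified
            net += payout - 1.0
            hits += 1
        else:                     # one of the other two terciles occurred
            net -= 1.0
    return net / seasons, hits / seasons


print(expected_profit_per_bet(1 / 3))  # ~0: no skill, no edge
print(expected_profit_per_bet(0.40))   # ~0.2 units per season
avg_gain, hit_rate = simulate(0.40, seasons=100_000)
print(avg_gain, hit_rate)
```

Under these assumptions the bettor loses about 60% of the time, yet still gains roughly 0.2 units per season on average: a real edge, but a modest one, which is what modest skill means.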

Further, the eastern US is an area where the forecasts are particularly unskillful. (The west coast, for example, is more strongly influenced by El Nino events such as the one that is trying to get going now, and more predictable as a result of that.)

So a confident forecast that a cold winter (or a warm one) will occur, with no statement of uncertainty or probabilities – such as AccuWeather’s – gives an exaggerated and misleading impression of the degree of certainty that is possible.

The NOAA forecast is truer to the science, in that it is stated in terms of probabilities, and does not express a high degree of confidence in any one outcome. That doesn’t mean it won’t be a cold winter, as AccuWeather says; it might be. It just means there is no way of being anywhere near as certain as their forecast implies.

That said, AccuWeather may be taking their cue from our normal daily weather forecasts (including those from NOAA, of which the National Weather Service is a part). Those, too, really should be stated in terms of probabilities, but are not. (Actually, they are for precipitation, e.g., “50% chance of rain”, but not for temperature.) So perhaps AccuWeather thinks people are more comfortable with deterministic forecasts, and so provides deterministic seasonal forecasts as well, even though they know (I have to assume they know) those forecasts will be wrong a good fraction of the time. I think that is unwise, given the low skill of seasonal forecasts in particular; it gives the public the wrong idea about the nature of the information they are being given. I believe most people are capable of understanding basic probabilities, and would be better served by forecasts stated in those terms.

I have not addressed why AccuWeather is going cold for the northeast while NOAA is going warm. I don’t know the answer to that. I am pretty sure they have access to most or all of the same information and just interpret it differently. But in my view it would be misleading to focus on this difference. The more important point is that both forecasts are uncertain, and should rightly be expressed in terms of slight changes in the probabilities. NOAA does express it this way, while AccuWeather doesn’t.

Finally: without looking at any weather data or models, one can say pretty confidently that it is very unlikely that this winter will be as cold as last winter was in the eastern US. Last winter was very extreme by historical standards, so a winter that extreme is – basically by definition – improbable in *any* year. No information currently available (including the state of El Nino), or that will be available ahead of time, is strong enough to change that. Again this is a probabilistic statement: it’s not impossible that this winter will be as cold as or colder than last winter, it’s just very unlikely.

Very well put! You might be a little disappointed to learn that NSF funds the deterministic type of climate outlook:

http://www.nsf.gov/news/special_reports/autumnwinter/predicts.jsp

If I understand correctly, the point of the AER/MIT NSF project is to focus on the role of snow cover. Ideally yes, any resulting forecast would be best delivered in probabilistic terms. But NSF funds research, not operations, and perhaps we shouldn’t be too harsh on a project like this whose focus is on one particular source of predictability rather than on producing a forecast optimized for consumption by users (I am guessing, without having read the proposal). I would guess that if this project produces convincing results, some of what they come up with may be incorporated into existing operational centers’ seasonal forecast schemes, including NOAA CPC’s, which are probabilistic.

Hi Adam, I think the thinking behind the AccuWeather forecast is actually based on the above-referenced work: Siberian snow is pretty high this month, which is correlated with high AO, which is correlated with more central US cold air outbreaks (or so the thinking goes; not sure how good that last link in the chain is).

Hi Dan, you may be right. I have to figure the NOAA people are aware of this line of reasoning as well, but don’t consider it as compelling as AccuWeather does. I have no particularly strong view (yet anyway; haven’t read the papers) about how important the snow influence is. But it seems to me the more important message that should come across in a seasonal forecast, for the northeast especially, is that it’s quite uncertain – pretty much always, and this year seems no exception.

If I am running a business that depends on seasonal forecasts, why would I rely on such a highly uncertain forecast, one that does not benefit the decision-making process? I would rather have deterministic information, even if it is not always right.

I believe the deterministic approach, taken by AccuWeather, is driven by clients’ needs and requests even though they understand its latent probabilistic (and risky) nature.

Usama, the forecast is uncertain no matter how we present it. So what you are saying is you know that the information is inherently probabilistic, but turning that into a deterministic forecast makes you better able to make a decision. I suppose that is a matter of human psychology. If it helps you (or some clients of AccuWeather) to take the scientific information and call it something that it is really not, I guess that’s fine, but I don’t understand it.

The NMME (North-American Multimodel Ensemble) monthly and three-monthly long-term outlooks (http://www.cpc.ncep.noaa.gov/products/NMME/) are a good alternative to the complicated CPC outlooks.

NMME gives temperature and precip deviations from climatology, i.e. “real” values, not just probabilities (although there are skill maps based on hindcasts, too). Kirtman et al. (BAMS, April 2014) explain the NMME and compare its skill against CPC’s outlooks, which are based on a single climate model (CFSv2), whereas NMME is based on many models and dozens of model variations (although the paper doesn’t say whether the variations are in the initial conditions or in the model physics). To me, NMME forecasts are much better to work with than CPC’s forecasts.

That said, the NMME largely failed to predict the current cold period in the Northeast, too.

Thanks Toni. Yes, the NMME is an important resource. You’re right that using the CFS only is a limitation of CPC’s approach. In other settings, multi-model means seem to outperform single models; my guess is that this is true for the NMME seasonal forecasts vs. single models (incl. CFS) as well, but has that been shown explicitly by Kirtman et al.?

At the same time, the NMME site does not seem designed for the casual user, in that it has a large number of maps for each lead time/variable. One would most likely want just the multi-model mean, but the user is given the whole enchilada all at once, without any filter. CPC has the advantage that it presents a single map, which is simpler.

I’m not sure at the end of the day that the anomalies in degrees (or whatever) are really more “real” than CPC’s probabilities either. In either case it’s clear that the stronger colors mean stronger signal of whatever sign, and I’m not sure that most users can really use much more information than that, esp. given the uncertainties.

Finally, as a person with red/green color confusion (a very mild form of color blindness), I find the NMME scale very difficult to read; they should take a cue from CPC, whose red-blue scale is much more accessible for those of us with this particular handicap!

In other words it seems to me that there is potential for someone to take the NMME outputs and formulate forecast products of various types, some of which might be more user-friendly than what is up on the site now.

Adam, thanks for your reply. I’m slightly red-green colorblind, too, so I share your trouble with the NMME scale. When I’m not sure, I take a screenshot and use the color meter on my computer to match the legend to the map.

Kirtman et al. say they give the individual model maps and the IMME map for researchers to compare (which is particularly interesting now, because some models actually predicted the cold in the NE). But I agree, for the end user this is not helpful.

IRI has a “map room” with a number of (seasonal) forecasts that I think are a little easier to understand, but that might still be confusing to the layperson: http://ow.ly/JAf7t

Kirtman et al. also do compare NMME against CFS (and all other models) for SST anomalies, precip, and air temperature. CanCM3 came very close for SST anomaly error, but in general NMME outperformed them all.

Concrete values rather than terciles, however, seem to be an advantage for at least some users. I interviewed farmers and ranchers in Oklahoma who found the CPC forecasts very confusing, unreliable, and unspecific, and because of that didn’t use them in their decision-making. In the end, the CPC forecasts only tell you the likelihood of exceedance for a three-month period; they don’t tell you by how much. At the same time, the darker reds and blues are misinterpreted as “warmer” or “colder” compared to the lighter reds and blues, even by TV meteorologists doing agriculture forecasts here. That’s fatal because it communicates exactly the wrong information.
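One way to see why a tercile probability doesn’t tell you “by how much” is to convert a forecast anomaly and its uncertainty into tercile probabilities. This is a minimal sketch assuming both the climatology and the forecast are Gaussian in standardized units (anomaly divided by the historical standard deviation); it is not how CPC actually produces its maps, and the two forecasts below are made-up numbers.

```python
from statistics import NormalDist

# Climatology in standardized units. Assumption: winter-mean temperature
# anomalies are roughly Gaussian.
CLIMO = NormalDist(mu=0.0, sigma=1.0)
LOWER = CLIMO.inv_cdf(1 / 3)  # below-normal / normal boundary
UPPER = CLIMO.inv_cdf(2 / 3)  # normal / above-normal boundary


def tercile_probs(mean_anomaly, spread):
    """Tercile probabilities implied by a Gaussian forecast of the anomaly."""
    fc = NormalDist(mu=mean_anomaly, sigma=spread)
    p_below = fc.cdf(LOWER)
    p_above = 1.0 - fc.cdf(UPPER)
    return {"below": p_below, "normal": 1.0 - p_below - p_above, "above": p_above}


# Two hypothetical forecasts (illustrative numbers only):
#   A: smaller warm anomaly, tightly constrained
#   B: larger warm anomaly, very uncertain
a = tercile_probs(mean_anomaly=0.6, spread=0.2)
b = tercile_probs(mean_anomaly=1.0, spread=1.5)
print(a["above"], b["above"])  # A has the higher "above normal" probability

# A forecast identical to climatology gives 1/3 for each tercile.
print(tercile_probs(0.0, 1.0))
```

Forecast A would be shaded a deeper red on a tercile map than forecast B (about 0.80 vs. 0.65 probability of above normal), even though B predicts the larger warm departure. Under these assumptions, a deeper color means more confidence that it will be above normal, not a bigger anomaly.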

I think the NMME gives applied researchers much more to work with than CPC does. But I agree, things can be improved a lot. Actually, that’s what my Ph.D. is about. I develop tailored monthly and seasonal forecasts for the ag community in Oklahoma, and I’ll probably use NMME data for that.